Project Description

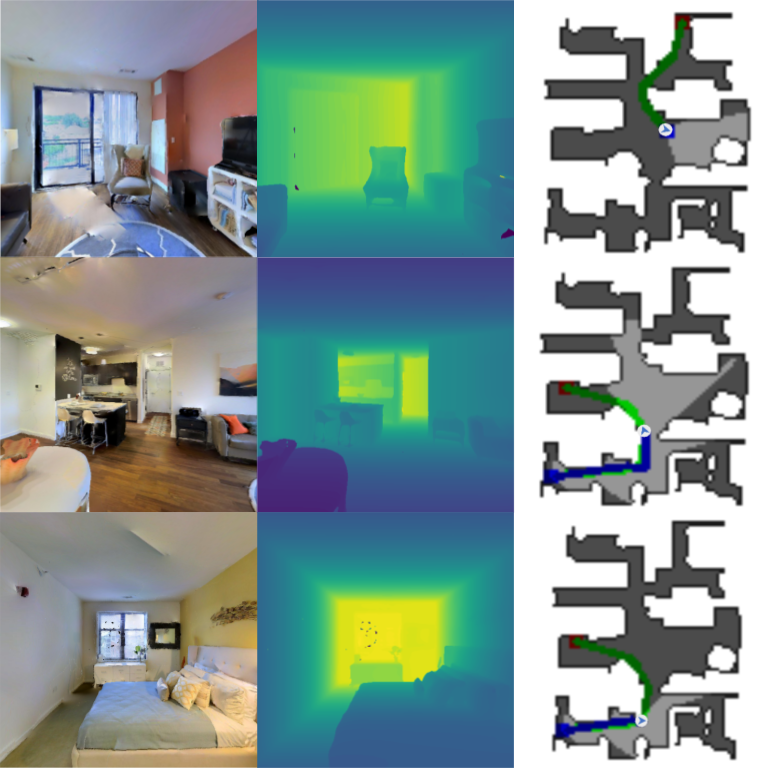

This project implements a detailed environment perception stack for self-driving cars. The semantic segmentation output of an image, computed using a convolutional neural network (CNN), is used as the input to the environment perception stack. The stack consists of 3 important sub-stacks:

- Estimating the ground plane using RANSAC:

To estimate the drivable surface for the car, the pixels corresponding to the ground plane in the scene were computed. This amounts to finding the pixels that belong to the road class in the semantic segmentation output. A depth map was used to find the depth of each pixel belonging to the road class.

RANSAC was used to fit the ground plane to the (x, y, z) coordinates of the pixels belonging to the road class, rejecting outliers in the process.
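The ground-plane step can be sketched as follows. This is a minimal illustration, not the project's actual code: the function names (`backproject`, `fit_plane`, `ransac_plane`), the pinhole intrinsic matrix `K`, the iteration count and the distance threshold are all assumptions, and the depth map is assumed to store z-depth in the camera frame.

```python
import numpy as np

def backproject(depth, K):
    """Turn an (H, W) depth map into per-pixel camera-frame x, y, z arrays.

    K is an assumed 3x3 pinhole intrinsic matrix.
    """
    h, w = depth.shape
    fx, fy, cu, cv = K[0, 0], K[1, 1], K[0, 2], K[1, 2]
    u, v = np.meshgrid(np.arange(w), np.arange(h))  # pixel column / row indices
    x = (u - cu) * depth / fx
    y = (v - cv) * depth / fy
    return x, y, depth

def fit_plane(points):
    """Least-squares plane through (N, 3) points: normal . p + d = 0."""
    centroid = points.mean(axis=0)
    # The right singular vector with the smallest singular value is the normal.
    _, _, vt = np.linalg.svd(points - centroid, full_matrices=False)
    normal = vt[-1]
    return normal, -normal.dot(centroid)

def ransac_plane(points, n_iters=100, dist_thresh=0.05, seed=0):
    """Fit a plane to (N, 3) points while rejecting outliers."""
    rng = np.random.default_rng(seed)
    best_inliers = np.zeros(len(points), dtype=bool)
    for _ in range(n_iters):
        # Three points are the minimal sample that defines a plane.
        sample = points[rng.choice(len(points), size=3, replace=False)]
        normal, d = fit_plane(sample)
        inliers = np.abs(points @ normal + d) < dist_thresh
        if inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    # Refit on every inlier of the best candidate for a more stable estimate.
    normal, d = fit_plane(points[best_inliers])
    return normal, d, best_inliers
```

With a boolean `road_mask` taken from the segmentation output (a hypothetical name), the input would be built as `np.column_stack([x[road_mask], y[road_mask], z[road_mask]])`.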

- Lane Estimation using Semantic Segmentation output:

Pixels corresponding to lane markings and sidewalks were found by applying a mask to the semantic segmentation output. Edge detection and Hough line estimation were then used to estimate all lines in the image.

Redundant lines were merged and horizontal lines were filtered through slope thresholding. The final output is as follows:

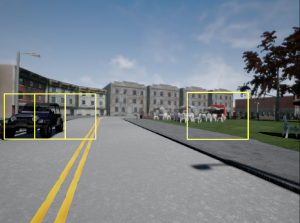
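The slope thresholding and merging described for lane estimation could look roughly like the sketch below. It assumes the Hough step has already produced line segments as `(x1, y1, x2, y2)` tuples, such as those returned by OpenCV's `cv2.HoughLinesP`. The function names, the thresholds and the greedy merging rule are illustrative assumptions, not the project's actual choices.

```python
def filter_horizontal(segments, min_slope=0.3):
    """Drop segments whose absolute slope |dy/dx| is at or below min_slope."""
    kept = []
    for x1, y1, x2, y2 in segments:
        dx, dy = x2 - x1, y2 - y1
        # Cross-multiplied slope test: avoids dividing by zero for vertical
        # segments and also rejects zero-length segments.
        if abs(dy) > min_slope * abs(dx):
            kept.append((x1, y1, x2, y2))
    return kept

def merge_lines(segments, a_tol=0.1, b_tol=20.0):
    """Greedily merge near-duplicate segments.

    Each line is written as x = a * y + b, which stays well defined for the
    steep lines that survive the horizontal filter. Returns one averaged
    (a, b) pair per group of similar lines.
    """
    clusters = []  # each cluster is a list of (a, b) pairs
    for x1, y1, x2, y2 in segments:
        a = (x2 - x1) / (y2 - y1)
        b = x1 - a * y1
        for cluster in clusters:
            mean_a = sum(p[0] for p in cluster) / len(cluster)
            mean_b = sum(p[1] for p in cluster) / len(cluster)
            if abs(a - mean_a) < a_tol and abs(b - mean_b) < b_tol:
                cluster.append((a, b))
                break
        else:
            clusters.append([(a, b)])  # no similar line found yet
    return [
        (sum(p[0] for p in c) / len(c), sum(p[1] for p in c) / len(c))
        for c in clusters
    ]
```

`merge_lines` expects segments that have already passed through `filter_horizontal`, since a perfectly horizontal segment would cause a division by zero.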

- Computing minimum distance to impact:

The output of a 2D object detector was used to compute the minimum distance to impact. Since this detector has high recall but low precision, the semantic segmentation output was used to determine which bounding boxes are valid.

Unreliable detections were filtered out by counting the pixels in each bounding box that the semantic segmentation output assigns to the box's predicted category. If the ratio of this pixel count to the total area of the bounding box is below a certain threshold, the bounding box is removed. The final output is as follows:

The minimum distance to impact is calculated by taking the root of the sum of squares of the x, y, z coordinates of each pixel and then taking the minimum of the resulting array.
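A rough sketch of the filtering and distance computation is shown below. The detection format `(x_min, y_min, x_max, y_max, class_id)`, the function names and the `min_ratio` value are assumptions made for illustration. Taking the minimum over every pixel inside the box, rather than only over pixels of the predicted class, is likewise one possible reading of the description.

```python
import numpy as np

def filter_detections(detections, segmentation, min_ratio=0.3):
    """Keep boxes whose area is sufficiently covered by their predicted class.

    detections: list of (x_min, y_min, x_max, y_max, class_id) in pixels.
    segmentation: (H, W) array of per-pixel class ids.
    """
    kept = []
    for x_min, y_min, x_max, y_max, class_id in detections:
        patch = segmentation[y_min:y_max, x_min:x_max]
        if patch.size == 0:
            continue  # degenerate box
        ratio = np.count_nonzero(patch == class_id) / patch.size
        if ratio >= min_ratio:
            kept.append((x_min, y_min, x_max, y_max, class_id))
    return kept

def min_distances(detections, x, y, z):
    """Minimum Euclidean distance from the camera to each (non-empty) box."""
    distance = np.sqrt(x ** 2 + y ** 2 + z ** 2)  # per-pixel range
    return [
        float(distance[y_min:y_max, x_min:x_max].min())
        for x_min, y_min, x_max, y_max, _ in detections
    ]
```

Here `x`, `y` and `z` are the per-pixel camera-frame coordinate arrays obtained from the depth map, and `min_distances` should be called on the boxes that survive `filter_detections`.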
